This AI used GPT-4 to become an expert Minecraft player

AI researchers have built a Minecraft bot that can explore and expand its capabilities in the game’s open world — but unlike other bots, this one basically wrote its own code through trial and error and lots of GPT-4 queries.

Called Voyager, this experimental system is an example of an “embodied agent,” an AI that can move and act freely and purposefully in a simulated or real environment. Personal-assistant-type AIs and chatbots don’t have to actually do stuff, let alone navigate a complex world to get that stuff done. But that’s exactly what a household robot might be expected to do in the future, so there’s lots of research into how such agents might pull it off.

Minecraft is a good place to test such things because it’s a very (very) approximate representation of the real world, with simple and straightforward rules and physics, but it’s also complex and open enough that there’s lots to accomplish or try. Purpose-built simulators are great, too, but they have their own limitations.

MineDojo is a simulation framework built around Minecraft, which is needed because you can’t just plonk a random AI in there and expect it to understand what all these blocks and pigs are doing. Its creators (there’s lots of overlap with the Voyager team) compiled YouTube videos about the game and their transcripts, wiki articles, and a whole lot of Reddit posts from r/minecraft, among other data, so users can create or fine-tune an AI model on them. It also lets those models be evaluated more or less objectively by seeing how well they do things like build a fence around a llama or find and mine a diamond.

Voyager excels at these tasks, performing much better than the next-best agent it was tested against, Auto-GPT. The two take a similar approach, though: both use GPT-4 to write their own code as they go.

Normally you’d just train a model on all that good Minecraft data and hope it would figure out how to fight skeletons when the sun goes down. Voyager, however, starts out relatively naive, and as it encounters things in the game, it has a little internal conversation with GPT-4 about what it ought to do and how.
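To make the trial-and-error idea concrete, here is a minimal Python sketch of that kind of loop, written under stated assumptions rather than lifted from Voyager’s code: the agent asks a language model for a program, runs it in the game, and feeds any error message back into its next request. `write_code` and `run_in_game` are hypothetical stand-ins for a GPT-4 call and a Minecraft executor, stubbed out here so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Attempt:
    code: str             # program the model proposed
    error: Optional[str]  # error reported by the game, or None on success


def learn_task(
    task: str,
    write_code: Callable[[str, list[Attempt]], str],
    run_in_game: Callable[[str], Optional[str]],
    max_tries: int = 4,
) -> tuple[Optional[str], list[Attempt]]:
    """Ask for code, run it, and feed errors back until it works or we give up."""
    history: list[Attempt] = []
    for _ in range(max_tries):
        code = write_code(task, history)  # hypothetical GPT-4 call; sees past failures
        error = run_in_game(code)         # hypothetical executor; None means success
        history.append(Attempt(code, error))
        if error is None:
            return code, history
    return None, history


# Stubs so the sketch runs without a model or a game server.
def fake_llm(task: str, history: list[Attempt]) -> str:
    # Pretend the model fixes its typo once it has seen an error message.
    return "craft('sword')" if history else "craft('swrod')"


def fake_game(code: str) -> Optional[str]:
    return None if code == "craft('sword')" else "unknown item: swrod"


code, history = learn_task("make a sword", fake_llm, fake_game)
print(code, len(history))  # craft('sword') 2
```

The point of keeping the whole history is that each new request can include what already went wrong, which is what lets the model correct itself instead of repeating the same mistake.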

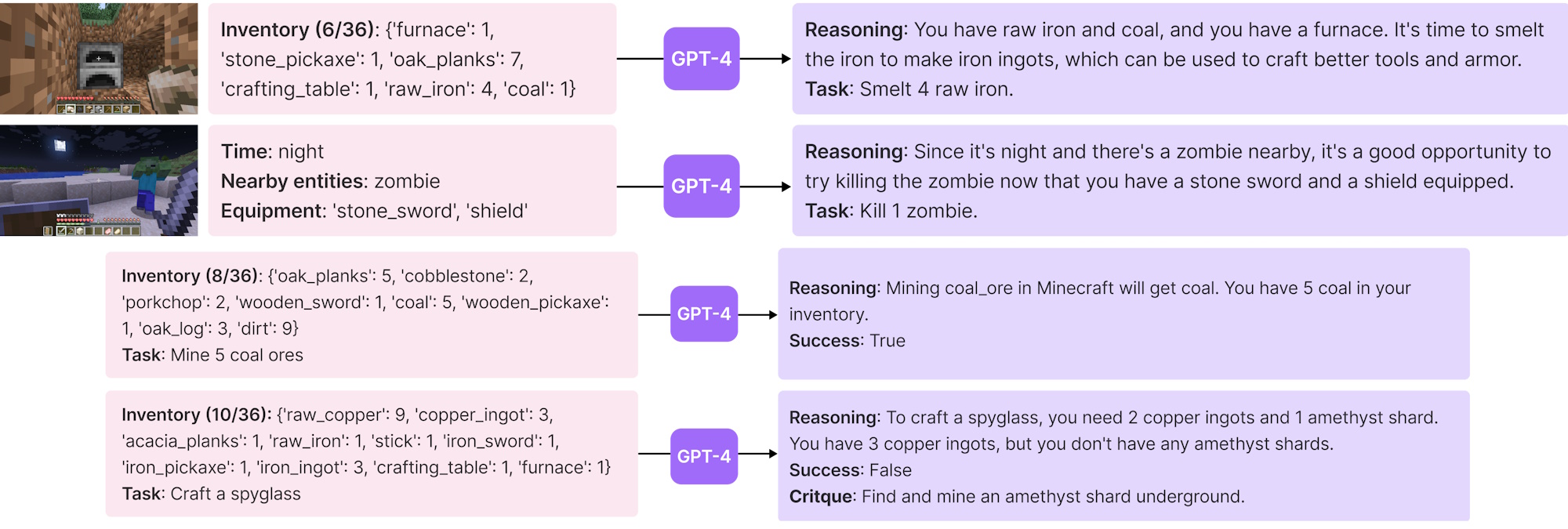

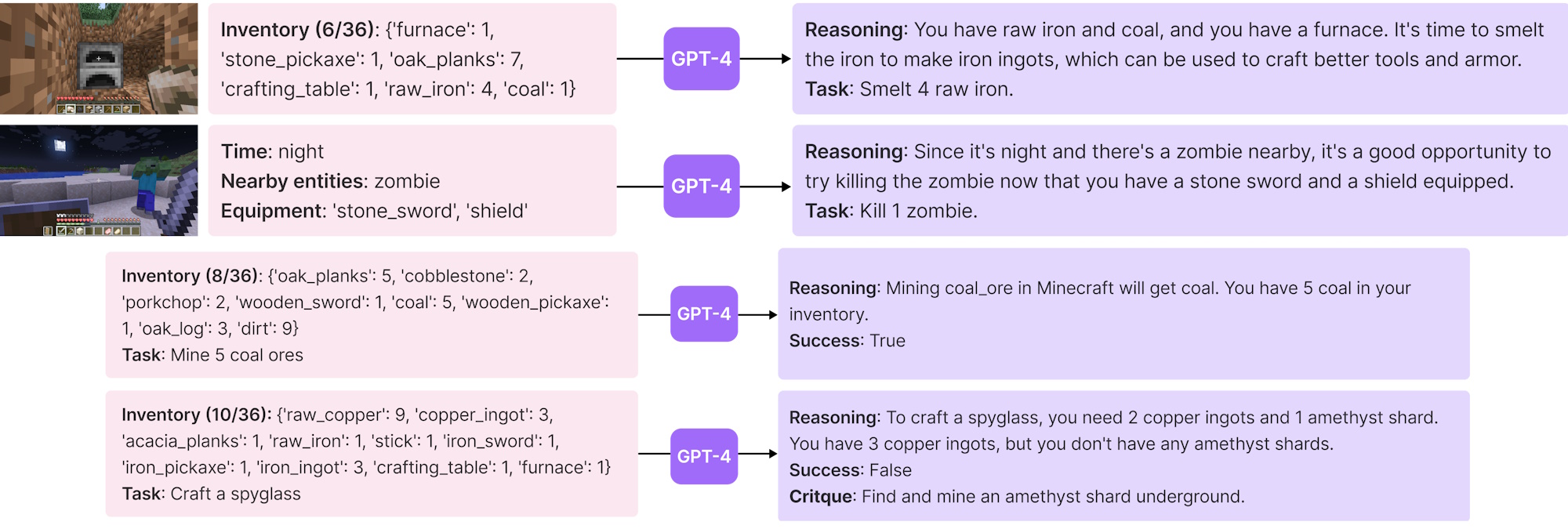

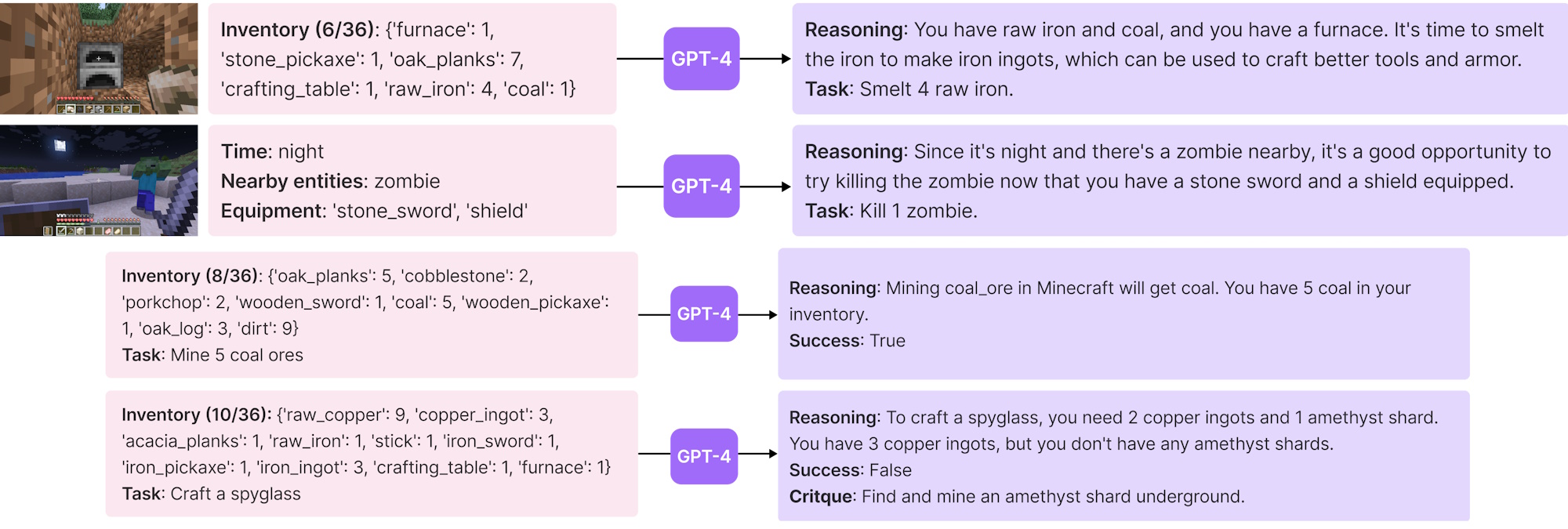

Directing the next action, and adding skills to the pile. Image Credits: MineDojo

For instance, night falls and the skeletons come out. The agent has a general idea of what that means, but it asks itself: What would a good player of this game do when there are monsters nearby? Well, GPT-4 says, if you want to explore the world safely, you’ll want to make and equip a sword, then whack the skeleton with it while avoiding getting hit. That general sense of what to do then gets translated into concrete goals: collect stone and wood, build a sword at the crafting table, equip it, and fight a skeleton.
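Voyager gets that breakdown from GPT-4. A simpler way to picture the result, swapped in here purely for illustration, is a dependency graph in which each goal lists what has to happen first; sorting it topologically yields an order the agent could follow. The goal names and prerequisites below are a simplified, hypothetical take on the sword example (real Minecraft also wants sticks, for instance).

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical, simplified prerequisites for "make a sword and fight a skeleton".
# Each key is a concrete goal; its value is the set of goals that must come first.
prerequisites = {
    "collect wood": set(),
    "build crafting table": {"collect wood"},
    "craft wooden pickaxe": {"build crafting table"},
    "collect stone": {"craft wooden pickaxe"},
    "craft stone sword": {"collect wood", "collect stone", "build crafting table"},
    "equip sword": {"craft stone sword"},
    "fight skeleton": {"equip sword"},
}

# static_order() yields every goal only after all of its prerequisites.
plan = list(TopologicalSorter(prerequisites).static_order())
for step_number, goal in enumerate(plan, start=1):
    print(step_number, goal)
```

An explicit graph like this only covers goals someone thought to write down; the appeal of asking a language model is that it can produce a sensible ordering for situations nobody mapped out in advance.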

Once it has done those things, the resulting skills are entered into a general skill library so that later, when the task is “go deep into a cave to find iron ore,” it doesn’t have to learn to fight again from scratch. It still uses GPT at this stage, but the cheaper and faster GPT-3.5, which helps pick out the skills most relevant to a given situation, so it doesn’t try to mine the skeleton and fight the ore.
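The skill library can be pictured as a lookup table from plain-language descriptions to stored programs, searched by similarity to the current task. In the minimal sketch below, a crude bag-of-words cosine similarity stands in for the GPT-3.5 step described above; the `SkillLibrary` class, its methods, the stopword list, and the skill entries are all hypothetical and not Voyager’s actual interface.

```python
import math
from collections import Counter

# Filler words ignored when comparing descriptions (an arbitrary, hypothetical list).
STOPWORDS = {"a", "an", "the", "to", "into", "in", "with", "and", "it", "of"}


def vectorize(text: str) -> Counter:
    # Bag-of-words counts: a crude stand-in for a language model's judgment.
    return Counter(w for w in text.lower().split() if w not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SkillLibrary:
    """Hypothetical store mapping skill descriptions to the code that performs them."""

    def __init__(self) -> None:
        self.skills: dict[str, str] = {}

    def add(self, description: str, code: str) -> None:
        self.skills[description] = code

    def retrieve(self, task: str, k: int = 2) -> list[str]:
        # Rank stored skills by similarity to the task and keep the top k.
        query = vectorize(task)
        ranked = sorted(self.skills, key=lambda d: cosine(query, vectorize(d)), reverse=True)
        return ranked[:k]


library = SkillLibrary()
library.add("craft a stone sword and equip it", "craft_stone_sword()")
library.add("fight a skeleton with a sword", "fight_skeleton()")
library.add("mine iron ore with a stone pickaxe", "mine_iron_ore()")

print(library.retrieve("go deep into a cave to find iron ore", k=1))
# ['mine iron ore with a stone pickaxe']
```

Word overlap is a blunt instrument (it would never guess that “zombie” and “skeleton” both call for the sword), which is exactly the kind of gap a language model is there to fill.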

It’s similar to an agent like Auto-GPT that, when faced with an interface it doesn’t know yet, has to teach itself to navigate it in order to accomplish its goal. But Minecraft is a much deeper environment than the ones Auto-GPT is typically used for, so a specialty agent like Voyager does far better: it finds more stuff, learns more skills, and explores a much greater area than the other bots.

Interestingly but perhaps not surprisingly, GPT-4 wipes the floor with GPT-3.5 (the model behind the free tier of ChatGPT) when it comes to generating useful code. In a test that swapped GPT-4 out for GPT-3.5, the agent hit a wall early on, perhaps even literally, and failed to improve. It may not be obvious from talking to the two models that one is much smarter, but the truth is you don’t have to be particularly smart to carry on an apparently intelligent conversation (ask me how I know). Coding is much more difficult, and GPT-4 was a big upgrade there.

The point of this research isn’t to make Minecraft players obsolete but to find methods by which relatively simple AI models can improve themselves based on their “experiences,” for lack of a better word. If we’re going to have robots helping us in our homes, hospitals, and offices, they will need to learn from experience and apply those lessons to future actions.